Solving Poisson PDE Systems (https://

with boundary conditions

where

using NeuralPDE

using Lux

using Optimization

using OptimizationOptimJL

using ModelingToolkit

using DomainSets

using LineSearches

using Plots2D PDE

@parameters x y

@variables u(..)

Dxx = Differential(x)^2

Dyy = Differential(y)^2

eq = Dxx(u(x, y)) + Dyy(u(x, y)) ~ -sinpi(x) * sinpi(y)Differential(x, 2)(u(x, y)) + Differential(y, 2)(u(x, y)) ~ -sinpi(y)*sinpi(x)Boundary conditions

bcs = [

u(0, y) ~ 0.0,

u(1, y) ~ 0.0,

u(x, 0) ~ 0.0,

u(x, 1) ~ 0.0

]4-element Vector{Symbolics.Equation}:

u(0, y) ~ 0.0

u(1, y) ~ 0.0

u(x, 0) ~ 0.0

u(x, 1) ~ 0.0Space domains

domains = [

x ∈ DomainSets.Interval(0.0, 1.0),

y ∈ DomainSets.Interval(0.0, 1.0)

]2-element Vector{Symbolics.VarDomainPairing}:

Symbolics.VarDomainPairing(x, 0.0 .. 1.0)

Symbolics.VarDomainPairing(y, 0.0 .. 1.0)Build a neural network for the PDE solver.

Input: 2 dimensions.

Hidden layers: 16 neurons * 2 layers.

Output: single output u(x, y)

dim = 2

chain = Lux.Chain(Dense(dim, 16, Lux.σ), Dense(16, 16, Lux.σ), Dense(16, 1))Chain(

layer_1 = Dense(2 => 16, σ), # 48 parameters

layer_2 = Dense(16 => 16, σ), # 272 parameters

layer_3 = Dense(16 => 1), # 17 parameters

) # Total: 337 parameters,

# plus 0 states.Discretization method usesPhysicsInformedNN() (PINN).

dx = 0.05

discretization = PhysicsInformedNN(chain, QuadratureTraining(; batch = 200, abstol = 1e-6, reltol = 1e-6))NeuralPDE.PhysicsInformedNN{Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}, NeuralPDE.QuadratureTraining{Float64, Integrals.CubatureJLh}, Nothing, Nothing, NeuralPDE.Phi{LuxCore.StatefulLuxLayerImpl.StatefulLuxLayer{Val{true}, Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}, Nothing, @NamedTuple{layer_1::@NamedTuple{}, layer_2::@NamedTuple{}, layer_3::@NamedTuple{}}}}, typeof(NeuralPDE.numeric_derivative), Bool, Nothing, Nothing, Nothing, Base.RefValue{Int64}, Base.Pairs{Symbol, Union{}, Nothing, @NamedTuple{}}}(Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}((layer_1 = Dense(2 => 16, σ), layer_2 = Dense(16 => 16, σ), layer_3 = Dense(16 => 1)), nothing), NeuralPDE.QuadratureTraining{Float64, Integrals.CubatureJLh}(Integrals.CubatureJLh(0), 1.0e-6, 1.0e-6, 1000, 200), nothing, nothing, NeuralPDE.Phi{LuxCore.StatefulLuxLayerImpl.StatefulLuxLayer{Val{true}, Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}, Nothing, @NamedTuple{layer_1::@NamedTuple{}, layer_2::@NamedTuple{}, layer_3::@NamedTuple{}}}}(LuxCore.StatefulLuxLayerImpl.StatefulLuxLayer{Val{true}, Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}, Nothing, @NamedTuple{layer_1::@NamedTuple{}, layer_2::@NamedTuple{}, layer_3::@NamedTuple{}}}(Lux.Chain{@NamedTuple{layer_1::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_2::Lux.Dense{typeof(NNlib.σ), Int64, Int64, Nothing, Nothing, Static.True}, layer_3::Lux.Dense{typeof(identity), Int64, Int64, Nothing, Nothing, Static.True}}, Nothing}((layer_1 = Dense(2 => 16, σ), layer_2 = Dense(16 => 16, σ), layer_3 = Dense(16 => 1)), nothing), nothing, (layer_1 = NamedTuple(), layer_2 = NamedTuple(), layer_3 = NamedTuple()), nothing, Val{true}())), NeuralPDE.numeric_derivative, false, nothing, nothing, nothing, NeuralPDE.LogOptions(50), Base.RefValue{Int64}(1), true, false, Base.Pairs{Symbol, Union{}, Nothing, @NamedTuple{}}())Build the PDE system and discretize it.

@named pde_system = PDESystem(eq, bcs, domains, [x, y], [u(x, y)])

prob = discretize(pde_system, discretization)OptimizationProblem. In-place: true

u0: ComponentVector{Float64}(layer_1 = (weight = [-0.31745225191116333 1.0122241973876953; 0.011934411711990833 0.2161230444908142; … ; 0.22904399037361145 1.022088646888733; 0.5701216459274292 0.6451277732849121], bias = [-0.3104079067707062, -0.0605483315885067, 0.47486981749534607, -0.11172733455896378, 0.4817638397216797, 0.05471503734588623, 0.06654911488294601, -0.3689786195755005, -0.3756614327430725, -0.2047443389892578, 0.24614012241363525, -0.3279259204864502, -0.3208068907260895, -0.5949823260307312, -0.467618852853775, -0.6274517774581909]), layer_2 = (weight = [-0.33401674032211304 -0.12918463349342346 … 0.055676452815532684 -0.2903096377849579; -0.10851673781871796 0.43142396211624146 … -0.13456669449806213 0.06107751652598381; … ; -0.3072296679019928 0.38943400979042053 … 0.3907761573791504 -0.2676927447319031; 0.19234952330589294 0.004004406277090311 … -0.35999003052711487 0.16301694512367249], bias = [-0.21017009019851685, 0.18975132703781128, -0.2442554235458374, -0.0028389394283294678, -0.11535605788230896, 0.21133548021316528, 0.13412952423095703, -0.19781053066253662, -0.09522184729576111, -0.18691986799240112, 0.08336251974105835, 0.040741950273513794, -0.22300025820732117, -0.04595354199409485, 0.12662270665168762, 0.09781265258789062]), layer_3 = (weight = [-0.36901476979255676 -0.05513883754611015 … -0.06883803755044937 -0.2452363669872284], bias = [-0.07034909725189209]))Callback function to record the loss

lossrecord = Float64[]

callback = function (p, l)

push!(lossrecord, l)

return false

end#2 (generic function with 1 method)Solve the problem. You can increase maxiters to get a better solution, but it will take more time.

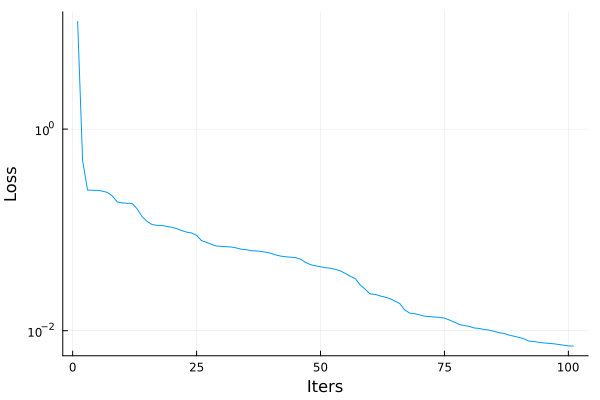

opt = OptimizationOptimJL.LBFGS(linesearch = LineSearches.BackTracking())

@time res = Optimization.solve(prob, opt, callback = callback, maxiters=100)319.829322 seconds (1.15 G allocations: 65.813 GiB, 2.81% gc time, 37.06% compilation time: <1% of which was recompilation)

retcode: MaxIters

u: ComponentVector{Float64}(layer_1 = (weight = [-0.9088068425168931 1.6157428625534818; 0.2188520183750844 0.21067543097161007; … ; 0.06301851489179061 0.5235684496438249; 0.7672863436136792 1.602080918114066], bias = [-0.9148205371443152, -0.07696046285512526, 0.2808871078016997, 0.25989583266646304, 0.7466425924615774, -0.04873462136763978, 1.1668642150697224, -0.22234656315865411, -0.6028818319799666, -0.10711662343726183, -0.8254531370850557, -0.1536507785577804, -0.32704304772233594, -0.7468470244951783, -1.1318419960272228, -0.8148936306662932]), layer_2 = (weight = [-0.694244226817537 -0.0808191302552739 … -0.11738071549454351 -0.2402238892588016; -0.08763915040628448 0.4769051257035782 … -0.140196862166043 -0.059136755489981076; … ; -0.2795265918831065 0.4147718620526298 … 0.3830448240074974 -0.4075387222769357; 0.25022189388350835 0.06705963890169003 … -0.42667393122438796 -0.08090379855398999], bias = [-0.012383448865768856, 0.29490550279820726, -0.3036732546606571, 0.24507227791953654, -0.2098535612238025, -1.0447456486608184, -0.4784322708716125, -0.08174506099006258, -0.11616674337301226, -0.2071503647230429, -0.17987484384150415, -0.20359219632704978, -0.4894677827401147, -0.16844670151273142, 0.19479123294556297, 0.29174683588241807]), layer_3 = (weight = [-0.967115627926398 0.4505673011745413 … 0.5785588416659191 0.37829601667417445], bias = [0.47912642962809654]))plot(lossrecord, xlabel="Iters", yscale=:log10, ylabel="Loss", lab=false)

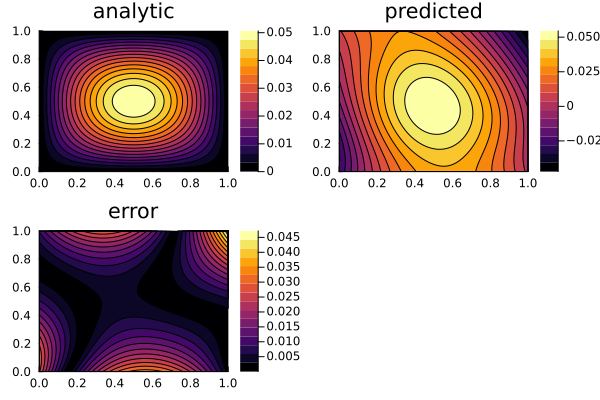

Plot the predicted solution of the PDE and compare it with the analytical solution to see the relative error.

xs, ys = [DomainSets.infimum(d.domain):dx/10:DomainSets.supremum(d.domain) for d in domains]

analytic_sol_func(x,y) = (sinpi(x)*sinpi(y))/(2pi^2)

phi = discretization.phi

u_predict = reshape([first(phi([x, y], res.u)) for x in xs for y in ys], (length(xs), length(ys)))

u_real = reshape([analytic_sol_func(x, y) for x in xs for y in ys], (length(xs), length(ys)))

diff_u = abs.(u_predict .- u_real)201×201 Matrix{Float64}:

0.0271773 0.0261987 0.025225 … 0.00296956 0.00233656 0.00169989

0.027355 0.0263821 0.025414 0.00279449 0.00217089 0.00154366

0.0275216 0.0265546 0.0255922 0.00262754 0.00201334 0.00139554

0.0276773 0.0267161 0.0257597 0.00246854 0.00186374 0.00125538

0.027822 0.0268668 0.0259164 0.00231732 0.00172193 0.00112301

0.0279558 0.0270067 0.0260622 … 0.00217374 0.00158774 0.000998254

0.0280786 0.0271356 0.0261974 0.00203761 0.001461 0.000880944

0.0281904 0.0272538 0.0263218 0.00190876 0.00134153 0.000770897

0.0282914 0.0273611 0.0264355 0.00178703 0.00122917 0.000667932

0.0283815 0.0274577 0.0265385 0.00167222 0.00112371 0.000571861

⋮ ⋱ ⋮

0.0073499 0.00775757 0.00816487 0.0417275 0.042541 0.0433555

0.00757013 0.00798627 0.00840196 0.0421865 0.0430118 0.0438381

0.00779408 0.00821877 0.00864292 0.0426443 0.0434815 0.0443196

0.00802183 0.00845513 0.0088878 … 0.0431009 0.04395 0.0447999

0.00825343 0.00869541 0.00913667 0.0435563 0.0444172 0.0452791

0.00848894 0.00893967 0.00938958 0.0440104 0.0448832 0.0457569

0.00872844 0.00918797 0.00964658 0.044463 0.0453477 0.0462333

0.00897197 0.00944035 0.00990773 0.0449142 0.0458108 0.0467083

0.00921959 0.00969688 0.0101731 … 0.0453638 0.0462724 0.0471818p1 = plot(xs, ys, u_real, linetype=:contourf, title = "analytic");

p2 = plot(xs, ys, u_predict, linetype=:contourf, title = "predicted");

p3 = plot(xs, ys, diff_u, linetype=:contourf, title = "error");

plot(p1, p2, p3)

This notebook was generated using Literate.jl.